An earlier post addressed one of the more perplexing challenges in managing an analytic community of any size: the irresistible urge to cling to what everyone else seems to be doing without thinking carefully about what is needed, not just wanted. This has become more important and urgent with the breathtaking speed of Big Data adoption in the analytic community. Older management styles and obsolete thinking have created needless friction between the business and its supporting IT organizations. Unlocking breakthrough results requires a deep understanding of why this friction occurs and what can be done to reduce the unnecessary effort so everyone can get back to the work at hand.

There are two very real and conflicting views that we need to balance carefully. The first, driven by the business, is concerned with just getting the job done and lends itself to an environment where tools (and even methods) proliferate rapidly. In most cases this results in overlapping, redundant, and expensive functionality. Less concerned with solving problems once, the analytic community is characterized by many independent efforts where significant intellectual property (analytic insight) is not captured and is inadvertently placed at risk.

The second view, in contrast, is driven by the supporting IT organization charged with managing and delivering supporting services across a technology portfolio that values efficiency and effectiveness. The ruthless pursuit of eliminating redundancy, leveraging the benefits of standardization, and optimizing investment drives this behavior. This is where the friction is introduced. Until you understand this dynamic, be prepared to experience organizational behavior that seems puzzling and downright silly at times. Questions like these (yes, they are real) never seem to be resolved.

– Why do we need another data visualization tool when we already have five in the portfolio?

– Why can’t we just settle on one NoSQL alternative?

– Is the data lake really a place to worry about data redundancy?

– Should we use the same Data Quality tools and principles in our Big Data environment?

What to Do

So I’m going to share a method to help resolve this challenge and focus on what is important, so you can spend your energy solving problems rather than creating them. Armed with a true understanding of the organizational dynamics, it is now a good time to revisit a first principle, that form follows function, to help resolve and mitigate what is an important and urgent problem. For more on this principle see Big Data Analytics and Cheap Suits.

This method knits together several key components and tools to craft an approach that you may find useful. The goal is to organize and focus the right resources to ensure successful Big Data Analytic programs meet expectations. Because of the amount of content involved, I will break this down into several posts, each building on the other, to keep the relative size and readability manageable. This approach seemed to work with the earlier series on Modeling the MDM Blueprint and How to Build a Roadmap, so I think I will stick to this method for now.

The Method

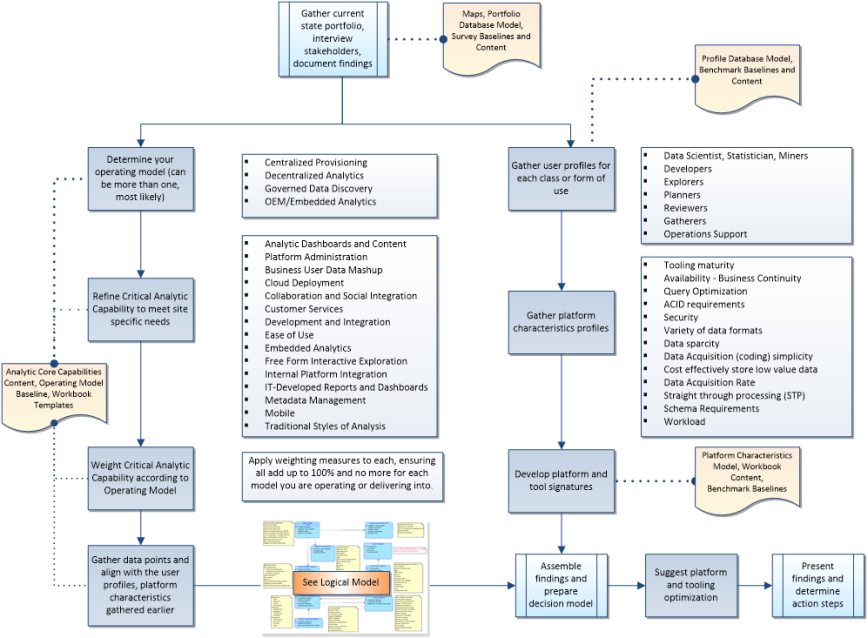

First let’s see what the approach looks like independent of any specific tools or methods. This includes nine (9) steps which can be performed concurrently by both business and technical professionals working together to arrive at the suggested platform and tooling optimization decisions. Each of the nine (9) steps in this method will be accompanied by a suggested tool or method to help you prepare your findings in a meaningful way. Most of these tools are quite simple; some will be a little more sophisticated. This represents a starting point on your journey and can be extended in any number of ways to re-purpose the data and facts collected in this effort. The important point is that all steps are designed to organize and focus the right resources to ensure successful Big Data Analytic programs meet expectations. Executed properly, you will find a seemingly effortless way to help:

– Reduce unnecessary effort

– Capture, manage, and operationally use analytic insight

– Uncover inefficient tools and processes and take action to remedy

– Tie results directly to business goals constrained by scope and objectives

So presented here is a simplified method for compiling an important body of work, supported by facts and data, to arrive at any number of important decisions in your analytics portfolio.

1) Gather current state analytics portfolio, interview stakeholders, and document findings

2) Determine the analytics operating model in use (most likely you will have more than one)

3) Refine Critical Analytic Capabilities as defined to meet site specific needs

4) Weight each Critical Analytic Capability according to each operating model in use

5) Gather user profiles and simple population counts for each form of use

6) Gather platform characteristics profiles

7) Develop platform and tool signatures

8) Gather data points and align with the findings

9) Assemble findings and prepare a decision model for platform and tooling optimization
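
As a rough illustration of how steps 4, 7, and 9 might fit together, here is a minimal Python sketch of a weighted scoring exercise. Everything in it is an assumption made for illustration: the capability names, the weights, the 0 to 5 rating scale, and the platform names are hypothetical and are not taken from the method itself. The later posts in this series describe the actual tools for each step.

```python
# Hypothetical sketch: rank platforms by weighted capability scores.
# All names, weights, and ratings below are illustrative assumptions.

# Step 4 (assumed form): capability weights for ONE operating model.
# Weights do not need to sum to 1; the score is normalized below.
capability_weights = {
    "data_discovery": 0.5,
    "advanced_modeling": 0.3,
    "operational_reporting": 0.2,
}

# Step 7 (assumed form): a "signature" rates each platform 0-5 per capability.
platform_signatures = {
    "platform_a": {"data_discovery": 4, "advanced_modeling": 2, "operational_reporting": 5},
    "platform_b": {"data_discovery": 3, "advanced_modeling": 5, "operational_reporting": 2},
}


def weighted_score(weights, signature):
    """Weighted average of a platform's ratings; missing capabilities count as 0."""
    total_weight = sum(weights.values())
    if total_weight == 0:
        raise ValueError("at least one capability weight must be non-zero")
    weighted_sum = sum(w * signature.get(cap, 0) for cap, w in weights.items())
    return weighted_sum / total_weight


def rank_platforms(weights, signatures):
    """Step 9 (assumed form): return (platform, score) pairs, best fit first."""
    scores = {name: weighted_score(weights, sig) for name, sig in signatures.items()}
    return sorted(scores.items(), key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, score in rank_platforms(capability_weights, platform_signatures):
        print(f"{name}: {score:.2f}")
```

Because step 2 expects more than one operating model, the same ranking would be repeated with a different weight table for each model. A platform that ranks first under one model may well rank last under another, which is exactly the kind of evidence that helps settle the "why do we need another tool" questions.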

The diagram at the top of this section illustrates the method graphically.

In a follow-up post I will dive into each step starting with gathering current state analytics portfolio, interviewing stakeholders, and documenting your findings. Along the way I will provide examples and tools you can use to help make your decisions and unlock breakthrough results. Stay tuned…