Matching algorithms, the functions that allow data quality tools to determine duplicate records and create households, are always a hot topic in the data quality community. In a previous installment of the Data Governance and Data Quality Insider, I wrote about the folly of probabilistic matching and its inability to precisely tune match results.

To recap, probabilistic matchers base their decisions on three things: 1) statistical analysis of the data; 2) a complicated mathematical formula; and 3) a “loose” or “tight” control setting. Statistical analysis is important because under probabilistic matching, values that are rarer in your data set carry more weight in determining a pass/fail on the match. In other words, if you have a lot of Smiths in your database, ‘Smith’ becomes a less important matching criterion for that record. If the record has an unusual last name like ‘Afinogenova’, that will carry more weight in determining the match.
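To make the weighting idea concrete, here is a minimal sketch in Python. It is not any vendor's actual formula; it assumes a simple inverse-frequency weight, log2(N / count), and the function name `frequency_weights` and the sample names are made up for illustration.

```python
import math
from collections import Counter

def frequency_weights(values):
    """Map each value to log2(N / count), so rarer values get larger weights.

    Assumption: a simplified inverse-frequency weight, used only to
    illustrate the idea. Real probabilistic matchers use more involved formulas.
    """
    counts = Counter(values)
    total = len(values)
    return {value: math.log2(total / count) for value, count in counts.items()}

# Hypothetical last-name column: six Smiths, one Jones, one Afinogenova.
last_names = ["Smith"] * 6 + ["Jones", "Afinogenova"]
weights = frequency_weights(last_names)

# Agreement on the rare name counts for more than agreement on the common one.
print(weights["Afinogenova"] > weights["Smith"])
```

Notice that the weights depend on the whole column: add more Smiths and every weight shifts.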

The trouble comes when you don’t like the way records are being matched. Your main course of action is to turn the dial on the loose/tight control to see if you can get the records to match without affecting record matching elsewhere in the process. Little provision is made for precise control over which records match and which don’t. There is always some degree of inaccuracy in the match.
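The dial problem can be sketched in a few lines of Python. The assumption here is that the loose/tight control behaves like a single score threshold; the pair labels and scores below are invented for illustration and do not come from any real tool.

```python
def matched_pairs(scored_pairs, threshold):
    """Return the set of pairs whose score meets the global threshold."""
    return {pair for pair, score in scored_pairs.items() if score >= threshold}

# Hypothetical match scores produced by a probabilistic matcher.
scored_pairs = {
    ("A1", "A2"): 9.1,  # an obvious duplicate
    ("B1", "B2"): 6.4,  # the pair you want to match
    ("C1", "C2"): 6.8,  # a pair you want kept apart
}

tight = matched_pairs(scored_pairs, threshold=8.0)
loose = matched_pairs(scored_pairs, threshold=6.0)

# Loosening the dial to capture B1/B2 also pulls in C1/C2.
print(sorted(loose - tight))
```

With only one dial to turn, there is no setting in this example that matches B1/B2 while keeping C1/C2 apart.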

In other forms of matching, like deterministic matching and rules-based matching, you can very precisely control which records come together and which ones don’t. If something isn’t matching properly, you can make a rule for it. The rules are easy to understand. It’s also very easy to perform forensics on the matching and figure out why two records matched, and that comes in handy should you ever have to explain to anyone exactly why you deduped any given record.
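As a rough sketch of what that looks like, consider the Python below. The rule names, record fields and sample records are all hypothetical; the point is only that each match decision comes back with the name of the rule that made it.

```python
# Ordered list of (rule name, predicate). All rules here are illustrative.
RULES = [
    ("exact_email",
     lambda a, b: bool(a["email"]) and a["email"].lower() == b["email"].lower()),
    ("same_last_name_first_initial_zip",
     lambda a, b: a["last"].lower() == b["last"].lower()
     and a["first"][:1].lower() == b["first"][:1].lower()
     and a["zip"] == b["zip"]),
]

def match(a, b):
    """Return the name of the first rule that matches, or None if none do."""
    for name, rule in RULES:
        if rule(a, b):
            return name
    return None

rec1 = {"first": "John", "last": "Smith", "zip": "02139", "email": ""}
rec2 = {"first": "J.", "last": "SMITH", "zip": "02139", "email": "js@example.com"}
rec3 = {"first": "Jane", "last": "Smith", "zip": "10001", "email": ""}

print(match(rec1, rec2))  # name of the rule that fired
print(match(rec1, rec3))  # None: no rule applies
```

If a pair isn't matching the way you want, you add or adjust one rule, and the returned rule name is your audit trail.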

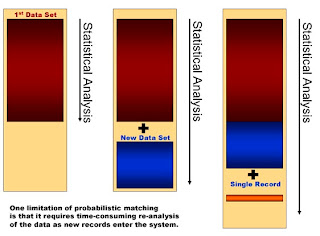

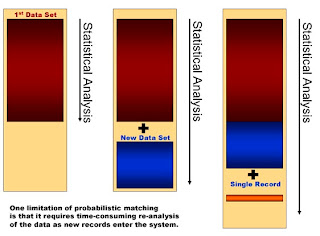

But there is another major folly of probabilistic matching: performance. Remember, probabilistic matching relies heavily on statistical analysis of your data. It wants to know how many instances of “John” and “Main Street” are in your data before it can determine if there’s a match.

Consider for a moment a real-time implementation, where records are entering the matching system at a rate of, say, one per second. The solution is trying to determine whether the new record closely resembles a record you already have in your database. For every record entering the system, shouldn’t the solution re-run statistics on the entire data set for the most accurate results? After all, the last new record you accepted into your database is going to change the stats, right? With medium-sized data sets, that’s going to take some time and some significant hardware to accomplish. With large sets of data, forget it.

Many vendors who tout their probabilistic matching quietly rely on workarounds for real-time matching performance issues. They recommend that you don’t update the statistics for every single new record. Depending on the real-time volumes, you might update statistics nightly or, say, every 100 records. But it’s safe to say that real-time performance is something you’re going to have to deal with if you go with a probabilistic data quality solution.
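Here is a minimal Python sketch of that kind of workaround, assuming the statistics are simple value counts that get fully recomputed on a fixed interval. The class name `BatchedStats` and the `refresh_every` parameter are hypothetical and not taken from any product.

```python
from collections import Counter

class BatchedStats:
    """Value counts that are recomputed only every `refresh_every` new records."""

    def __init__(self, values, refresh_every=100):
        self.values = list(values)
        self.refresh_every = refresh_every
        self.pending = 0                      # records added since last refresh
        self.counts = Counter(self.values)    # full pass over the data

    def add(self, value):
        self.values.append(value)
        self.pending += 1
        if self.pending >= self.refresh_every:
            self.counts = Counter(self.values)  # another full pass
            self.pending = 0

# Tiny interval so the staleness is easy to see.
stats = BatchedStats(["Smith", "Smith", "Jones"], refresh_every=2)

stats.add("Smith")
stale = stats.counts["Smith"]   # statistics have not been refreshed yet

stats.add("Lee")
fresh = stats.counts["Smith"]   # refresh just ran and picked up the new Smith

print(stale, fresh)
```

Between refreshes, matches are scored against statistics that no longer describe the data, which is the trade-off you accept with this approach.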

Better yet, you can stay away from probabilistic matching and take a much less complicated and much more accurate approach: using time-tested, pre-built business rules, supplemented with your own unique business rules, to precisely determine matches.