Managing the access, storage and use of data effectively can give businesses a competitive advantage. Last year I outlined what the big deal is in big data, as the initial focus on the volume, velocity and variety of data – what my colleague Tony Cosentino calls the three V’s – is only one small piece of how organizations should evaluate this technology. The more balanced approach is to include what he calls the three W’s – the what, so what and now what – which shifts the focus to an outcome-based view that can handle the time-to-value urgency found in business. Big data analytics can help assess the volume of data, while the velocity of data that is potentially in motion is best handled by what we call operational intelligence. Beyond these, techniques and technologies such as predictive analytics and visual discovery facilitate extracting more value from big data. Along with a wide variety of data, these tools help organizations focus on optimizing information assets. We will soon conduct benchmark research into information optimization to determine how organizations are dealing with their information today and what steps they are taking to improve. In-memory computing will surely be one of those steps, as it can significantly improve the time-to-insight equation.

Big data does not magically become valuable, nor is it easily implemented. Organizations must realize they still require good information management competencies that address the full lifecycle of access and integration across in-house and cloud computing resources. Our research into data in the cloud has found that most organizations are not prepared to handle this broad, distributed data dilemma, which has become very important. Technology to harvest and integrate data must become easier to use for both business and IT users, which means overcoming the prevailing reliance on spreadsheets, which our research has found is a larger data challenge than most people realize.

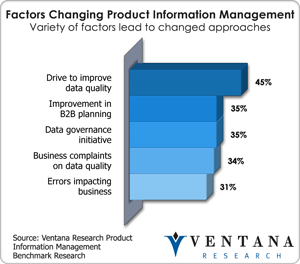

Our research agenda for 2013 calls for us to examine not just the forms of big data technology, but also the impact and value of big data tools that organizations can use to maximize the value of their information and drive better insights. Realizing the vision of greater intelligence across processes and teams takes an investment of time, effort and money. Accomplishing such a feat requires focusing on information competencies to support big data effectively, which in turn requires an assessment of the information processes that deliver data to the business. Our research into operational intelligence found that the use of events is a critical part of the big data environment. At the same time the skills of master data management and data governance do not go away, and in fact become important to address the business accuracy question that inevitably pops up when more data becomes available to be utilized. Our research into product information management has found that the drive for data quality is changing organizations’ approaches, and that a comprehensive information strategy using MDM and PIM together across business and IT can yield significant benefits compared to an IT-only approach. Our Product Information Management Value Index found some startling results about which vendors really meet the business needs of organizations. Product information is one of many priorities, along with customer, employee and finance information, that need to be part of a big data effort and an information optimization set of processes.

To embrace big data and optimize the use of information across an entire enterprise requires not just competencies and methods to ensure effective deployment, but also the ability to understand the business cycles for information and design the technology accordingly. Our research this year will examine the varying types of big data technology, including data appliances, Hadoop, in-memory computing and RDBMSes, all of which our big data research in 2012 indicated have a nice growth pattern going into 2013. The number of Hadoop tools in particular has expanded dramatically in the last year, making it easier for existing staffers to utilize that technology without having to hire dedicated programmers, as was the case for early adopters.

In addition, we have seen that large-scale in-memory computing architectures can provide significant value when it comes to working with big data. A new generation of data appliances arrived on the scene in 2012. Of course it is critical to explore new methods to provide analytics and gain insights from big data and not assume that your existing providers will provide you a competitive edge with innovative approaches. Also, businesses must not limit themselves to the use of structured data when they can also utilize varying forms of content to help get a more integrated view of information. Big data can become strategic, but businesses need some technical acuity to design the best possible architecture for their needs.

It will be critical to understand best practices for big data in 2013 and not get caught in the never-ending cycle of evaluating technology. IT organizations need to deliver value to business iteratively in order to be seen as contributing to the value business expects from technology investments. It is also critical that IT and business analysts work together to find the right big data approach. They must reduce time spent on data-related tasks; our technology innovation benchmark research found that more than 40 percent of organizations currently spend too much time on them. Supporting a variety of data will be critical, as even location data and the resulting analytics, which we are researching, are a much larger priority in business than most people in IT realize. It might very well be that businesses must adopt a distributed set of big data technologies to meet their collective needs.

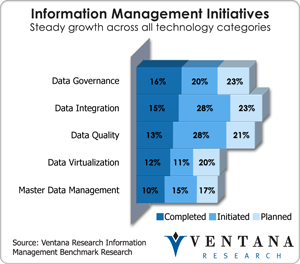

Being efficient in blending big data with existing applications requires good data integration. Our assessment in 2012 found a large selection of vendors in this area, but only some are exploiting the integration points of big data technology and where they might exist in cloud computing environments. Successful data integration might require a deeper examination of data virtualization, which our information management research found to be a growing priority. This year will see a new crop of information and business applications that exploit the value of big data and are aligned to specific line-of-business needs. At the same time the use of dedicated analytics designed for this approach, which we call big data analytics, will require examination in 2013; my colleague Tony Cosentino has plans for new research on lessons learned and the technology providers in this area, as he outlined in his agenda for 2013.

Businesses must make sure to examine new methods of assembling and harvesting information from existing applications and systems for business, including those that might not be classified as big data but can deliver the value required for business needs. What’s old can still be new, and the role of data integration has never been more important to automate the flow of data in the enterprise. We will research further into data integration in 2013 to determine best practices and build upon our existing Value Index on Data Integration with a new report that highlights the expansion of data integration vendors’ support for big data and cloud computing.

As information becomes potentially more easily managed, organizations need to expand upon traditional business intelligence and look at the role of predictive analytics, which our research has found is essential for optimizing business processes and aiding critical business decisions. We have found the largest obstacle to predictive analytics is difficulty integrating new tools into existing information architectures. For many organizations, our research has found that getting the basics right in business analytics requires a dedicated approach. With the commoditization of hardware and memory and the concomitant increased computing potential, as well as the emergence of cloud computing options, these new methods for taking advantage of big data become more cost-effective for a spectrum of small and big businesses.

All of these are exciting advancements in the science of information management, and CIOs should make a point of learning about and investing in all of them. Being more intelligent with big data is the mantra for 2013. Organizations that heed lessons learned and research the right path forward will reduce their risk of not delivering the value their businesses demand. While a big portion of the technology sector attaches itself to big data, being pragmatic and assessing the right path forward will be the most important best practice for 2013.

Come read and download the full research agenda.

Regards,

Mark Smith

CEO & Chief Research Officer